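Writing a Scrapy crawler is fairly simple. It comes down to three parts: the spider, the item, and the pipeline.

The last two follow a standard pattern, so the spider is the only part that needs a detailed walkthrough.

Here is how it goes:

I looked at several car websites and settled on Bitauto (易车网) as the site to crawl, because its data source is remarkably convenient.

On this page, the left-hand column lists the brands in order. You look up the sub-brands under each brand and then view their details one by one.

The usual approach would be to parse that left-hand list by hand and find the link to each brand's page.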

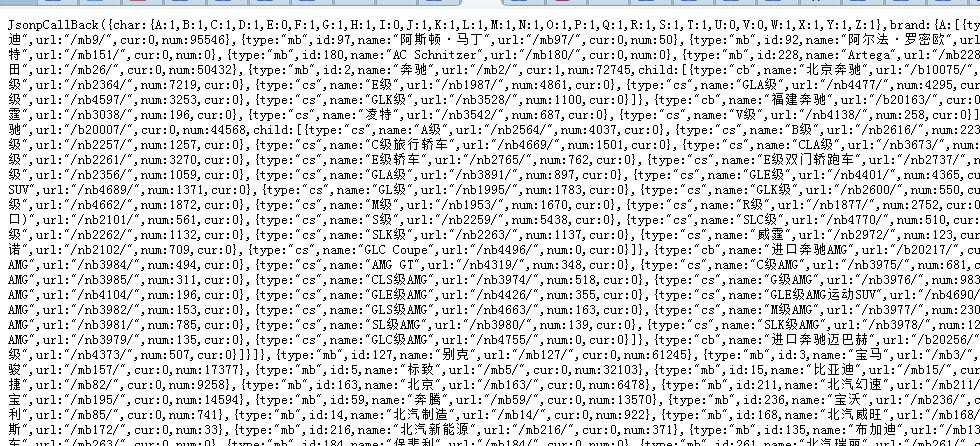

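Looking at the page source, though, it turns out the navigation bar is generated by JavaScript, and the JSON returned by the following link already contains all of the information:

http://api.car.bitauto.com/CarInfo/getlefttreejson.ashx?tagtype=baojia&pagetype=masterbrand&objid=2

The data we need can be parsed straight out of that response.

It could be parsed as JSON, which I did not try. I took the simplest route and extracted the links with a regular expression.

The code is short: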

    import re

    json_str = """
    (the complete response from the URL above)
    JsonpCallBack({char:{A:1,B:1,C:1,D:1,E:0,F:1,G:1,H:1,I:0,J:1,K:1,L:1,M:1,
    N:1,O:1,P:1,Q:1,R:1,S:1,T:1,U:0,V:0,W:1,X:1,Y:1,Z:1}
    ,brand:{A:[{type:"mb",id:9,name:"奥迪",url:"/mb9/",cur:0,num:95546}
    ~~~~~~~~~~ too long; the rest is omitted here
    """

    # Collect every brand link of the form /mb<number>/
    result = re.findall(r'/mb\d+/', json_str)
    print(result)
    # mb_pages = ['/mb9/', '/mb97/', ]  # 192 entries
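If the brand name is wanted alongside the link, a slightly richer pattern can capture both. The payload shown above has unquoted keys, so `json.loads` would reject it as-is, which is one reason a regex is convenient here. The following is a minimal sketch under assumptions: the sample string is shortened, the second record (including its `num` value) is made up for illustration, and `extract_brands` is a hypothetical helper name.

```python
import re

# Shortened sample in the shape shown above. Only the /mb9/ record comes
# from the original response; the /mb2/ record is made up for illustration.
sample = (
    'JsonpCallBack({char:{A:1,B:1},'
    'brand:{A:[{type:"mb",id:9,name:"奥迪",url:"/mb9/",cur:0,num:95546}],'
    'B:[{type:"mb",id:2,name:"奔驰",url:"/mb2/",cur:0,num:1}]}})'
)

# Capture id, name and url from each master-brand ("mb") record.
BRAND_RE = re.compile(r'type:"mb",id:(\d+),name:"([^"]+)",url:"(/mb\d+/)"')


def extract_brands(text):
    """Return a list of (id, name, url) tuples, one per brand record."""
    return [(int(i), name, url) for i, name, url in BRAND_RE.findall(text)]


brands = extract_brands(sample)
for brand_id, name, url in brands:
    print(brand_id, name, url)
```

The pattern relies on the field order seen in the sample (`type`, `id`, `name`, `url`); if the site changes that order, the pattern would need adjusting.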

So the code for the brand pages is:

    def parse(self, response):
        page_root = 'price.bitauto.com'  # same as response.url.split('/')[2]
        # /mb9/ Audi, /mb2/ Benz, /mb3/ BMW
        mb_pages = ['/mb2/', '/mb3/', '/mb9/']
        for info in mb_pages:
            page_url = 'http://' + page_root + info
            # Pass the brand path along so later callbacks can record it
            yield scrapy.Request(url=page_url, meta={'treeurl': info},
                                 callback=self.parse_brand_page)
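The URL building in this step can also be done with `urljoin` instead of string concatenation. Below is a small stdlib-only sketch; `brand_page_urls` and `BASE_URL` are hypothetical names introduced for illustration, and the duplicate filtering is an addition that the original code does not perform.

```python
from urllib.parse import urljoin

BASE_URL = 'http://price.bitauto.com/'


def brand_page_urls(hrefs, base=BASE_URL):
    """Join each relative href onto the base URL, skipping repeats but keeping order."""
    seen = set()
    urls = []
    for href in hrefs:
        url = urljoin(base, href)
        if url not in seen:
            seen.add(url)
            urls.append(url)
    return urls


urls = brand_page_urls(['/mb2/', '/mb3/', '/mb9/', '/mb2/'])
print(urls)
```

Inside a Scrapy callback, `response.urljoin(href)` performs the same join relative to the current page.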

Next come the sub-brands listed on each brand page.

Locate the elements and crawl them with the following code:

    def parse_brand_page(self, response):
        # Test from the command line: scrapy shell http://price.bitauto.com/mb196/
        page_xpath = '//div[@id="c_result"]/div[@class="carpic_list"]/ul/li'
        page_root = 'price.bitauto.com'  # same as response.url.split('/')[2]
        treeurl = response.meta['treeurl']
        brand = response.xpath('//div[@class="tree_navigate"]/div/strong/text()').extract()[0]
        for info in response.xpath(page_xpath):
            page_href = info.xpath('a/attribute::href').extract()[0]
            page_url = 'http://' + page_root + page_href
            yield scrapy.Request(url=page_url, meta={'treeurl': treeurl, 'brand': brand},
                                 callback=self.parse_car_page)

That leads to the model detail page. What we actually need is the specification page, so we follow one more request:

    def parse_car_page(self, response):
        # Link from the model page to its specification page
        peizhi_xpath = '//a[@id="linkOutCs"]/@href'
        page_url = response.xpath(peizhi_xpath).extract()[0]
        treeurl = response.meta['treeurl']
        brand = response.meta['brand']
        yield scrapy.Request(url=page_url, meta={'treeurl': treeurl, 'brand': brand},
                             callback=self.parse_detail_page)

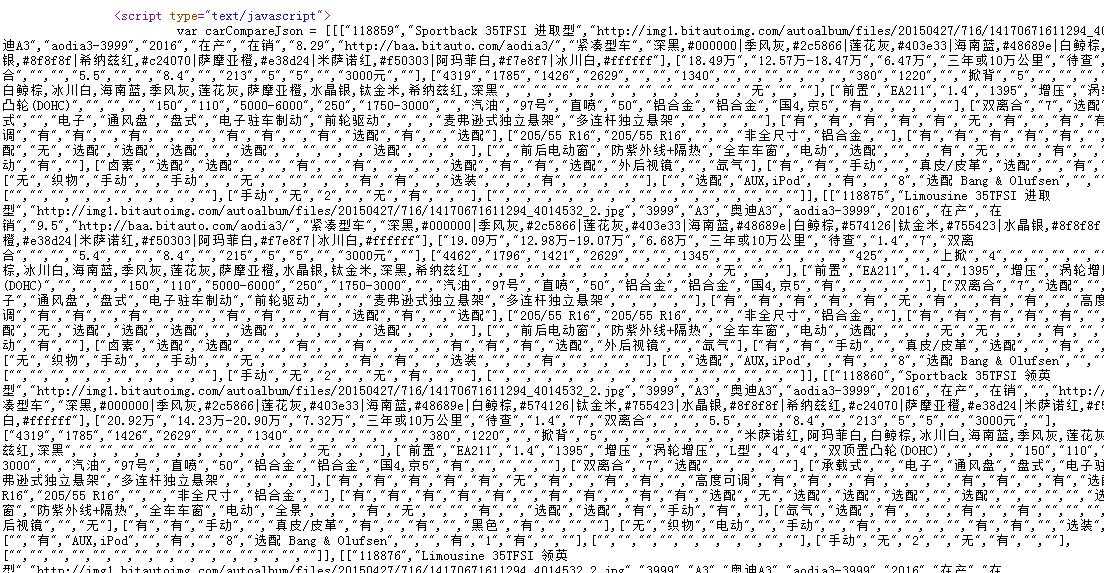

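Finally we reach the specification page, which holds the data we are after.

The page source shows that all of the data still lives inside the JavaScript code as a string, which is exactly where regular expressions shine.

The data is a string nested in three levels of brackets, [[[ ]]], and it is handled like this:

    ff = re.search(r'\[\[\[.*\]\]\]', response.text).group()  # str
    infos = ast.literal_eval(ff)

ff holds the entire [[[ ]]] literal. ast.literal_eval turns it into nested Python lists (unlike eval, it only accepts literals, so scraped text is never executed as code), and a loop then picks out the values at the positions we need. The code is as follows: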

    def parse_detail_page(self, response):
        # Test from the command line: scrapy shell http://car.bitauto.com/changchengh5/peizhi/
        ff = re.search(r'\[\[\[.*\]\]\]', response.text).group()  # str
        infos = ast.literal_eval(ff)  # safer than eval for scraped text; needs `import ast`
        for s_second in infos:
            item = BitautoCarItem()
            item['carid'] = s_second[0][0]  # "117388"
            item['url'] = response.url
            item['brand'] = response.meta['brand']
            item['treeurl'] = response.meta['treeurl']
            item['brandurl'] = s_second[0][6]  # e.g. changchengh5, benchieji
            item['brandmodel4'] = s_second[0][4]  # "哈弗H5" "奔驰E级"
            item['brandmodel5'] = s_second[0][5]
            item['version'] = s_second[0][1]  # "经典版 2.0T 手动 两驱 精英型"
            item['image'] = s_second[0][2]
            item['cyear'] = s_second[0][7]
            item['ctype'] = s_second[0][12]  # "SUV"
            item['color'] = s_second[0][13]
            item['price1'] = s_second[1][0]  # manufacturer's suggested price
            item['price2'] = s_second[1][1]  # dealer quote
            item['displacement'] = s_second[1][5]  # "2.0", displacement (L)
            item['shiftgears'] = s_second[1][6]  # "6"
            item['shifttype'] = s_second[1][7]  # "手动"
            # Length, width, height; prefixed with c for clarity
            item['clength'] = s_second[2][0]
            item['cwidth'] = s_second[2][1]
            item['cheight'] = s_second[2][2]
            item['wheelbase'] = s_second[2][3]  # wheelbase
            item['mingrounddistance'] = s_second[2][8]  # minimum ground clearance
            item['motor'] = s_second[3][1]  # engine model
            item['intaketype'] = s_second[3][5]  # intake type
            item['maxhorsepower'] = s_second[3][13]  # maximum horsepower (Ps)
            item['maxpower'] = s_second[3][14]  # maximum power (kW)
            item['maxrpm'] = s_second[3][15]  # engine speed at maximum power (rpm)
            item['oiltype'] = s_second[3][19]  # fuel type
            item['oilsupply'] = s_second[3][21]  # fuel delivery method
            item['tankvolume'] = s_second[3][22]  # fuel tank capacity (L)
            item['drivetype'] = s_second[5][6]  # drive type
            item['braketype'] = s_second[5][5]  # parking brake type
            item['frontwheel'] = s_second[7][0]  # front wheel
            item['backwheel'] = s_second[7][1]  # rear wheel
            yield item
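The extraction step can be tried outside Scrapy on a small fragment. The sketch below is illustrative only: the `var data = ...` wrapper and the price and image values are made up, and `extract_nested_list` is a hypothetical helper. It uses `ast.literal_eval`, which only accepts literals and therefore does not run scraped text as code.

```python
import ast
import re

# Made-up page fragment in the [[[ ... ]]] shape described above.
page = (
    'var data = [[["117388","经典版 2.0T 手动 两驱 精英型","img.png"],["12.98","11.50"]],'
    '[["117389","豪华版 2.0T","img2.png"],["14.98","13.20"]]];'
)


def extract_nested_list(text):
    """Return the [[[...]]] literal found in text as nested lists, or [] if absent."""
    # Greedy match: from the first "[[[" to the last "]]]" on the same line.
    match = re.search(r'\[\[\[.*\]\]\]', text)
    if match is None:
        return []
    return ast.literal_eval(match.group())


cars = extract_nested_list(page)
for car in cars:
    # car[0] is the basic-info group, car[1] the price group in this sample
    print(car[0][0], car[0][1], car[1][0])
```

Returning an empty list when nothing matches avoids the `AttributeError` that calling `.group()` on `None` would raise for a page without the data block.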

Putting it all together, the complete spider is:

    #!/usr/bin/env python
    # coding=utf-8
    import ast
    import re

    import scrapy

    from Car_spider.items import BitautoCarItem


    class BitautoSpider(scrapy.Spider):
        name = 'bitauto'
        allowed_domains = ['bitauto.com']
        start_urls = ['http://price.bitauto.com/mb2/']

        def parse(self, response):
            page_root = 'price.bitauto.com'  # same as response.url.split('/')[2]
            # /mb9/ Audi, /mb2/ Benz, /mb3/ BMW
            mb_pages = ['/mb2/', '/mb3/', '/mb9/']
            for info in mb_pages:
                page_url = 'http://' + page_root + info
                yield scrapy.Request(url=page_url, meta={'treeurl': info},
                                     callback=self.parse_brand_page)

        def parse_brand_page(self, response):
            # Test from the command line: scrapy shell http://price.bitauto.com/mb196/
            page_xpath = '//div[@id="c_result"]/div[@class="carpic_list"]/ul/li'
            page_root = 'price.bitauto.com'  # same as response.url.split('/')[2]
            treeurl = response.meta['treeurl']
            brand = response.xpath('//div[@class="tree_navigate"]/div/strong/text()').extract()[0]
            for info in response.xpath(page_xpath):
                page_href = info.xpath('a/attribute::href').extract()[0]
                page_url = 'http://' + page_root + page_href
                yield scrapy.Request(url=page_url, meta={'treeurl': treeurl, 'brand': brand},
                                     callback=self.parse_car_page)

        def parse_car_page(self, response):
            # Link from the model page to its specification page
            peizhi_xpath = '//a[@id="linkOutCs"]/@href'
            page_url = response.xpath(peizhi_xpath).extract()[0]
            treeurl = response.meta['treeurl']
            brand = response.meta['brand']
            yield scrapy.Request(url=page_url, meta={'treeurl': treeurl, 'brand': brand},
                                 callback=self.parse_detail_page)

        def parse_detail_page(self, response):
            # Test from the command line: scrapy shell http://car.bitauto.com/changchengh5/peizhi/
            ff = re.search(r'\[\[\[.*\]\]\]', response.text).group()  # str
            infos = ast.literal_eval(ff)  # safer than eval for scraped text
            for s_second in infos:
                item = BitautoCarItem()
                item['carid'] = s_second[0][0]  # "117388"
                item['url'] = response.url
                item['brand'] = response.meta['brand']
                item['treeurl'] = response.meta['treeurl']
                item['brandurl'] = s_second[0][6]  # e.g. changchengh5, benchieji
                item['brandmodel4'] = s_second[0][4]  # "哈弗H5" "奔驰E级"
                item['brandmodel5'] = s_second[0][5]
                item['version'] = s_second[0][1]  # "经典版 2.0T 手动 两驱 精英型"
                item['image'] = s_second[0][2]
                item['cyear'] = s_second[0][7]
                item['ctype'] = s_second[0][12]  # "SUV"
                item['color'] = s_second[0][13]
                item['price1'] = s_second[1][0]  # manufacturer's suggested price
                item['price2'] = s_second[1][1]  # dealer quote
                item['displacement'] = s_second[1][5]  # "2.0", displacement (L)
                item['shiftgears'] = s_second[1][6]  # "6"
                item['shifttype'] = s_second[1][7]  # "手动"
                # Length, width, height; prefixed with c for clarity
                item['clength'] = s_second[2][0]
                item['cwidth'] = s_second[2][1]
                item['cheight'] = s_second[2][2]
                item['wheelbase'] = s_second[2][3]  # wheelbase
                item['mingrounddistance'] = s_second[2][8]  # minimum ground clearance
                item['motor'] = s_second[3][1]  # engine model
                item['intaketype'] = s_second[3][5]  # intake type
                item['maxhorsepower'] = s_second[3][13]  # maximum horsepower (Ps)
                item['maxpower'] = s_second[3][14]  # maximum power (kW)
                item['maxrpm'] = s_second[3][15]  # engine speed at maximum power (rpm)
                item['oiltype'] = s_second[3][19]  # fuel type
                item['oilsupply'] = s_second[3][21]  # fuel delivery method
                item['tankvolume'] = s_second[3][22]  # fuel tank capacity (L)
                item['drivetype'] = s_second[5][6]  # drive type
                item['braketype'] = s_second[5][5]  # parking brake type
                item['frontwheel'] = s_second[7][0]  # front wheel
                item['backwheel'] = s_second[7][1]  # rear wheel
                yield item
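The long run of index assignments could also be expressed as a lookup table, which keeps the positions in one place and tolerates rows that are shorter than expected. This is an optional sketch, not the author's code: `FIELD_MAP` covers only a handful of the fields, `build_record` is a hypothetical helper, and the sample row (including the prices) is made up.

```python
# (group index, position) for a few of the fields used in the spider above.
FIELD_MAP = {
    'carid': (0, 0),
    'version': (0, 1),
    'price1': (1, 0),
    'price2': (1, 1),
    'clength': (2, 0),
}


def build_record(car, field_map=FIELD_MAP, default=None):
    """Pick values out of one nested car row; missing positions become `default`."""
    record = {}
    for field, (group, pos) in field_map.items():
        try:
            record[field] = car[group][pos]
        except IndexError:
            record[field] = default
    return record


# Made-up row whose third group is empty, to show the fallback.
car = [["117388", "经典版 2.0T 手动 两驱 精英型"], ["12.98", "11.50"], []]
record = build_record(car)
print(record)
```

Since a Scrapy item can be populated like a dict, a record built this way could presumably be passed on as `BitautoCarItem(**record)`, provided every key is a declared field.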

So far we have only covered parsing page by page and skipped the item, since it is simple and needs little explanation. It is defined as:

    import scrapy


    class BitautoCarItem(scrapy.Item):
        carid = scrapy.Field()
        url = scrapy.Field()
        treeurl = scrapy.Field()
        brand = scrapy.Field()
        brandurl = scrapy.Field()
        brandmodel4 = scrapy.Field()  # "哈弗H5"
        brandmodel5 = scrapy.Field()  # "哈弗H5"
        version = scrapy.Field()  # "经典版 2.0T 手动 两驱 精英型"
        image = scrapy.Field()
        cyear = scrapy.Field()
        ctype = scrapy.Field()  # "SUV"
        color = scrapy.Field()
        price1 = scrapy.Field()  # manufacturer's suggested price
        price2 = scrapy.Field()  # dealer quote
        displacement = scrapy.Field()  # "2.0", displacement (L)
        shiftgears = scrapy.Field()  # "6"
        shifttype = scrapy.Field()  # "手动"
        # Length, width, height; prefixed with c for clarity
        clength = scrapy.Field()
        cwidth = scrapy.Field()
        cheight = scrapy.Field()
        wheelbase = scrapy.Field()  # wheelbase
        mingrounddistance = scrapy.Field()  # minimum ground clearance
        motor = scrapy.Field()  # engine model
        intaketype = scrapy.Field()  # intake type
        maxhorsepower = scrapy.Field()  # maximum horsepower (Ps)
        maxpower = scrapy.Field()  # maximum power (kW)
        maxrpm = scrapy.Field()  # engine speed at maximum power (rpm)
        oiltype = scrapy.Field()  # fuel type
        oilsupply = scrapy.Field()  # fuel delivery method
        tankvolume = scrapy.Field()  # fuel tank capacity (L)
        drivetype = scrapy.Field()  # drive type
        braketype = scrapy.Field()  # parking brake type
        frontwheel = scrapy.Field()  # front tire size
        backwheel = scrapy.Field()  # rear tire size

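The pipeline can be written however you like; it is boilerplate as well. A MongoDB pipeline looks like this:

    from pymongo import MongoClient
    from scrapy.utils.project import get_project_settings

    # Imports were omitted in the original; loading the project settings
    # this way is one option.
    settings = get_project_settings()


    class MongoDBPipeline(object):
        def __init__(self):
            connection = MongoClient(
                settings['MONGODB_SERVER'],
                settings['MONGODB_PORT']
            )
            db = connection[settings['MONGODB_DB']]
            self.collection = db[settings['MONGODB_COLLECTION']]

        def process_item(self, item, spider):
            # insert_one replaces the deprecated Collection.insert
            self.collection.insert_one(dict(item))
            return item  # hand the item on to any later pipeline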

A relational database such as PostgreSQL works too:

    import psycopg2
    from scrapy.utils.project import get_project_settings

    from Car_spider.items import BitautoCarItem

    # Imports were omitted in the original; loading the project settings
    # this way is one option.
    settings = get_project_settings()

    # Table columns, named after the item fields
    COLUMNS = (
        'carid', 'url', 'treeurl', 'brand', 'brandurl', 'brandmodel4', 'brandmodel5',
        'version', 'image', 'cyear', 'ctype', 'color', 'price1', 'price2',
        'displacement', 'shiftgears', 'shifttype', 'clength', 'cwidth', 'cheight',
        'wheelbase', 'mingrounddistance', 'motor', 'intaketype', 'maxhorsepower',
        'maxpower', 'maxrpm', 'oiltype', 'oilsupply', 'tankvolume', 'drivetype',
        'braketype', 'frontwheel', 'backwheel',
    )


    class CarsPgPipeline(object):
        def __init__(self):
            self.connection = psycopg2.connect(
                database=settings['POSTGRES_DB'],
                user=settings['POSTGRES_USER'],
                password=settings['POSTGRES_PW'],
                host=settings['POSTGRES_SERVER'],
                port=settings['POSTGRES_PORT'],
            )
            self.cursor = self.connection.cursor()

        def process_item(self, item, spider):
            if isinstance(item, BitautoCarItem):
                # One %s placeholder per column; the values are passed separately
                # so psycopg2 handles quoting and escaping.
                _sql = 'INSERT INTO BitautoCar (%s) VALUES (%s);' % (
                    ', '.join(COLUMNS), ', '.join(['%s'] * len(COLUMNS)))
                try:
                    self.cursor.execute(_sql, [item[c] for c in COLUMNS])
                    self.connection.commit()
                except Exception as e:
                    self.connection.rollback()
                    print("Error: %s" % e)
            return item
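Passing values as query parameters, rather than formatting them into the SQL string, matters as soon as a value contains a quote character. The sketch below illustrates the idea with the standard-library `sqlite3` module as a stand-in for PostgreSQL, so it runs without a database server. It is an assumption-laden illustration: the three-column table, the `build_insert` helper, and the brand value `D'Auto` are all made up, and sqlite3 uses `?` placeholders where psycopg2 uses `%s`.

```python
import sqlite3

COLUMNS = ['carid', 'brand', 'version']  # a small subset of the item fields


def build_insert(table, columns, placeholder='?'):
    """Build an INSERT statement with one placeholder per column."""
    cols = ', '.join(columns)
    marks = ', '.join([placeholder] * len(columns))
    return 'INSERT INTO {} ({}) VALUES ({})'.format(table, cols, marks)


conn = sqlite3.connect(':memory:')
conn.execute('CREATE TABLE BitautoCar (carid TEXT, brand TEXT, version TEXT)')

# The apostrophe would break a statement assembled with string formatting.
item = {'carid': '117388', 'brand': "D'Auto", 'version': '2.0T'}
sql = build_insert('BitautoCar', COLUMNS)
conn.execute(sql, [item[c] for c in COLUMNS])
conn.commit()

rows = conn.execute('SELECT carid, brand, version FROM BitautoCar').fetchall()
print(rows)
```

For psycopg2 the same helper would be called with `placeholder='%s'`.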

The project settings:

    BOT_NAME = 'Car_spider'

    SPIDER_MODULES = ['Car_spider.spiders']
    NEWSPIDER_MODULE = 'Car_spider.spiders'

    ITEM_PIPELINES = {
        'Car_spider.pipelines.CarsPgPipeline': 1000,
    }

    MONGODB_SERVER = 'localhost'
    MONGODB_PORT = 27017
    MONGODB_DB = 'car'
    MONGODB_COLLECTION = 'kache360'  # 'bitantotest'

    POSTGRES_SERVER = 'localhost'
    POSTGRES_PORT = 5432
    POSTGRES_DB = 'yourdb'
    POSTGRES_USER = 'yourname'
    POSTGRES_PW = '123456'

    ROBOTSTXT_OBEY = True
    DOWNLOAD_DELAY = 3
    RANDOMIZE_DOWNLOAD_DELAY = True
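As documented by Scrapy, when `RANDOMIZE_DOWNLOAD_DELAY` is enabled the wait between requests to the same site is a random value between 0.5 and 1.5 times `DOWNLOAD_DELAY`. The sketch below only illustrates that arithmetic for the value used here; it is not Scrapy's internal code, and `delay_bounds` and `sample_delay` are hypothetical names.

```python
import random

DOWNLOAD_DELAY = 3  # seconds, as in the settings above


def delay_bounds(base_delay):
    """Return the (shortest, longest) wait implied by a randomized delay."""
    return 0.5 * base_delay, 1.5 * base_delay


def sample_delay(base_delay, rng=random):
    """Draw one wait time uniformly from the randomized range."""
    low, high = delay_bounds(base_delay)
    return rng.uniform(low, high)


low, high = delay_bounds(DOWNLOAD_DELAY)
print(low, high)
print(sample_delay(DOWNLOAD_DELAY))
```

With a base delay of 3 seconds, each request to the site therefore waits somewhere between 1.5 and 4.5 seconds.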

Original post: http://www.cnblogs.com/xiaoyy3/p/5980334.html