What has been done:

This paper proposes a novel Deep Supervised Hashing (DSH) method that learns compact, similarity-preserving binary codes for large-scale image data.

Data sets:

CIFAR-10: 60,000 32*32 images belonging to 10 mutually exclusive categories (6,000 images per category)

NUS-WIDE: 269,648 images from Flickr, warped to 64*64

Content-based image retrieval: find images that are visually or semantically similar to the query.

Traditional method: calculate the distance between the query image and the database images.

Problem: with a large database, exhaustive comparison is expensive in both time and memory.

Solution: hashing methods (map images to compact binary codes that approximately preserve the data structure in the original space)
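The appeal of binary codes is that comparing two images reduces to an XOR and a bit count. A minimal sketch, assuming codes are stored as Python integers; `hamming_distance`, `rank_by_hamming`, and the toy 8-bit codes are illustrative and not taken from the paper:

```python
from typing import List


def hamming_distance(a: int, b: int) -> int:
    """Number of differing bits between two codes stored as integers."""
    return bin(a ^ b).count("1")


def rank_by_hamming(query: int, database: List[int]) -> List[int]:
    """Return database indices sorted by Hamming distance to the query."""
    return sorted(range(len(database)),
                  key=lambda i: hamming_distance(query, database[i]))


# Toy 8-bit codes (made-up values for illustration).
database = [0b10110010, 0b10110011, 0b01001101, 0b10100010]
query = 0b10110010
print(rank_by_hamming(query, database))  # [0, 1, 3, 2]
```

Ties keep their database order because Python's sort is stable.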

Problem: performance depends on the features used, and these methods are better suited to visual similarity search than to semantic similarity search.

Solution: CNNs. Their success in various tasks implies that features learned by CNNs capture the underlying semantic structure of images well, in spite of significant appearance variations.

Related works:

Locality Sensitive Hashing (LSH): uses random projections to produce hash bits

cons: requires long codes to achieve satisfactory performance (large memory footprint).
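The random-projection idea behind LSH can be sketched in a few lines. This is a minimal sign-of-random-hyperplane variant, assuming Gaussian projection directions; `make_lsh`, `lsh_hash`, and the toy vectors are hypothetical names and data chosen for illustration:

```python
import random


def make_lsh(dim, n_bits, seed=0):
    """Draw n_bits random Gaussian hyperplanes in a dim-dimensional space."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]


def lsh_hash(planes, x):
    """One bit per hyperplane: 1 if x lies on its non-negative side, else 0."""
    return [1 if sum(w * v for w, v in zip(plane, x)) >= 0 else 0
            for plane in planes]


def bit_difference(code1, code2):
    """Hamming distance between two bit lists of equal length."""
    return sum(p != q for p, q in zip(code1, code2))


planes = make_lsh(dim=4, n_bits=16)
a = [1.0, 0.5, -0.2, 0.3]
b = [1.1, 0.4, -0.1, 0.3]      # close to a
c = [-1.0, -0.5, 0.2, -0.3]    # points in the opposite direction to a
code_a, code_b, code_c = (lsh_hash(planes, v) for v in (a, b, c))
print(bit_difference(code_a, code_b))  # usually small for nearby vectors
print(bit_difference(code_a, code_c))  # every bit flips for opposite vectors
```

Because the hyperplanes ignore the data distribution, many bits are needed before the Hamming distance tracks the angle between vectors well, which is the memory cost noted above.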

data-dependent hashing methods: unsupervised vs supervised

unsupervised methods: only make use of unlabelled training data to learn hash functions

- Spectral Hashing (SH): minimizes the weighted Hamming distance of image pairs

- Iterative Quantization (ITQ): minimizes the quantization error on projected image descriptors so as to alleviate the information loss

supervised methods: take advantage of label information and can thus preserve semantic similarity

- CCA-ITQ: an extension of iterative quantization

- Predictable Discriminative Binary Codes: looks for hyperplanes that separate categories with a large margin and uses them as hash functions.

- Minimal Loss Hashing (MLH): optimizes an upper bound of a hinge-like loss to learn the hash functions

problem: the above methods use linear projections as hash functions and can only deal with linearly separable data.

solution: Supervised Hashing with Kernels (KSH) and Binary Reconstructive Embedding (BRE).

Deep hashing: exploits a non-linear deep network to produce binary codes.

Problem: most hashing methods relax the binary codes to real values during optimization and then quantize the model outputs to produce binary codes. However, there is no guarantee that the optimal real-valued codes are still optimal after quantization.

Solution: Discrete Graph Hashing (DGH) and Supervised Discrete Hashing (SDH) are proposed to directly optimize the binary codes.

Problem: these methods use hand-crafted features and cannot capture semantic information.

Solution: CNN-based hashing methods

Our goal: similar images should be encoded to similar binary codes and the binary codes should be computed efficiently.

Loss function:

Relaxation:
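These notes leave the loss and its relaxation blank. As I recall the paper, the loss is a contrastive-style pairwise term computed on relaxed (real-valued) network outputs, plus a regularizer that pushes each output towards +1 or -1 so that little is lost when taking the sign. The sketch below is my reading of that form under that assumption, not a verified transcription; `dsh_pair_loss`, `margin`, and `alpha` are illustrative names, and the default value of `alpha` is a placeholder:

```python
def dsh_pair_loss(b1, b2, similar, margin, alpha=0.01):
    """Pairwise loss on relaxed codes b1, b2 (lists of floats).

    similar=True  : penalize the squared distance between the two codes.
    similar=False : penalize only pairs closer than the margin.
    The regularizer measures how far each entry is from +1 or -1.
    """
    sq_dist = sum((u - v) ** 2 for u, v in zip(b1, b2))
    if similar:
        fit = 0.5 * sq_dist
    else:
        fit = 0.5 * max(margin - sq_dist, 0.0)
    reg = (sum(abs(abs(u) - 1.0) for u in b1)
           + sum(abs(abs(v) - 1.0) for v in b2))
    return fit + alpha * reg


def binarize(b):
    """Final binary code: sign of each relaxed output."""
    return [1 if u >= 0 else -1 for u in b]


x = [0.9, -1.1, 0.2, 1.0]
y = [1.0, -0.8, -0.3, 0.9]
print(dsh_pair_loss(x, y, similar=True, margin=8.0))
print(binarize(x), binarize(y))
```

In this sketch, entries close to zero (such as 0.2 and -0.3) are where binarization is least reliable, and they are also the entries the regularizer penalizes most.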

Implementation details:

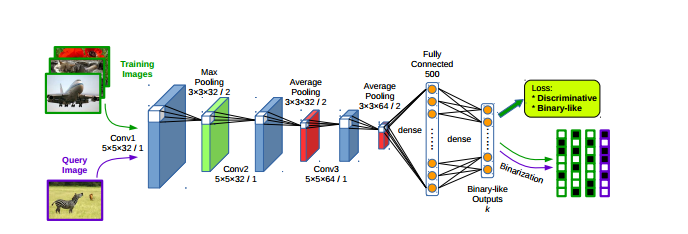

Network structure:

3 * convolutional layers:

3 * pooling layers:

2 * fully connected layers:

Training methodology:

- Generate image pairs online by exploiting all the image pairs in each mini-batch. This alleviates the need to store the whole pair-wise similarity matrix, making the method scalable to large-scale data sets.

- Fine-tune vs Train from scratch
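The online pair generation mentioned in the first bullet can be sketched as follows. This assumes single-label data (as in CIFAR-10), where two images are similar when their class ids match; `batch_pairs` and the toy labels are illustrative:

```python
from itertools import combinations


def batch_pairs(labels):
    """Enumerate all index pairs in a mini-batch with a similarity flag."""
    return [(i, j, labels[i] == labels[j])
            for i, j in combinations(range(len(labels)), 2)]


labels = [3, 7, 3, 1]      # class ids of one toy mini-batch
pairs = batch_pairs(labels)
print(len(pairs))          # 6, i.e. n * (n - 1) / 2 for n = 4
print(pairs[1])            # (0, 2, True)
```

A batch of n images yields n(n-1)/2 pairs on the fly, so no N-by-N similarity matrix over the whole training set is ever materialized. For multi-label data such as NUS-WIDE, the equality test would presumably be replaced by a check for at least one shared label.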

Experiment:

CIFAR-10

GIST descriptors for conventional hashing methods

NUS-WIDE

225-D normalized block-wise color moment features

Evaluation Metrics

mAP: mean Average Precision
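For reference, a minimal sketch of how mAP is commonly computed from ranked results. Each query contributes a list of booleans marking whether the result at each rank is relevant; the function names and toy rankings are illustrative, and the exact evaluation protocol of the paper may differ:

```python
def average_precision(relevance):
    """AP for one query; relevance[i] is True if the i-th ranked result is relevant."""
    hits, precisions = 0, []
    for rank, rel in enumerate(relevance, start=1):
        if rel:
            hits += 1
            precisions.append(hits / rank)  # precision at this relevant hit
    return sum(precisions) / len(precisions) if precisions else 0.0


def mean_average_precision(relevance_lists):
    """Mean of the per-query AP values."""
    return sum(average_precision(r) for r in relevance_lists) / len(relevance_lists)


rankings = [
    [True, False, True],   # AP = (1/1 + 2/3) / 2
    [False, True],         # AP = 1/2
]
print(mean_average_precision(rankings))
```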

precision-recall curves(48-bit)

mean precision within Hamming radius 2 for different code lengths
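Precision within Hamming radius 2 treats the code as a hash-table key: everything within 2 bits of the query is returned, and precision is the fraction of those results sharing the query's label. A sketch assuming integer codes and single labels; returning zero for an empty lookup is a convention assumed here, and the names and toy data are illustrative:

```python
def precision_within_radius(query_code, query_label, db_codes, db_labels, radius=2):
    """Fraction of database items within `radius` bits that share the query label."""
    retrieved = [lbl for code, lbl in zip(db_codes, db_labels)
                 if bin(query_code ^ code).count("1") <= radius]
    if not retrieved:
        return 0.0  # assumed convention: a failed lookup scores zero
    return sum(lbl == query_label for lbl in retrieved) / len(retrieved)


db_codes = [0b1010, 0b1000, 0b0110, 0b0101]   # distances to query: 0, 1, 2, 4
db_labels = [0, 0, 1, 1]
print(precision_within_radius(0b1010, 0, db_codes, db_labels))  # 2 of 3 retrieved match
```

Averaging this value over all queries, for each code length, gives the curve this metric refers to.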

Network ensembles?

Comparison with state-of-the-art method

CNNH: trains the model to fit pre-computed discriminative binary codes. Binary code generation and network learning are isolated.

DLBHC: trains the model with a binary-like hidden layer as features for classification; encoding dissimilar images to similar binary codes is not penalized.

DNNH: uses triplet-based constraints to describe more complex semantic relations, but training its networks becomes more difficult due to the sigmoid non-linearity and the parameterized piece-wise threshold function used in the output layer.

Combine binary code generation with network learning

Comparison of Encoding Time