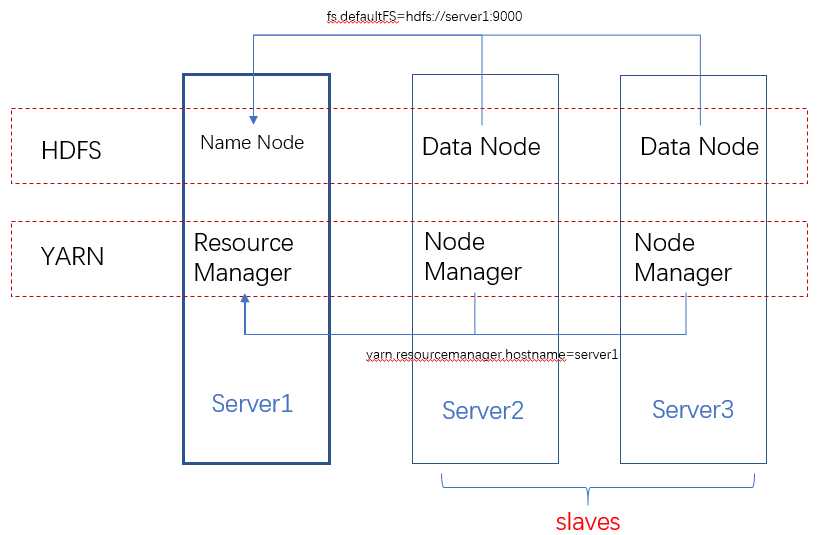

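Cluster Architecture

Installing Hadoop essentially means configuring the HDFS and YARN clusters. As the architecture diagram shows, every DataNode in HDFS must be configured with the location of the NameNode. Likewise, every NodeManager in YARN must be configured with the location of the ResourceManager.

Given how critical the NameNode and ResourceManager are, are they single points of failure in a cluster? In Hadoop 1.0 this was indeed a problem, but it has been addressed in 2.x through high-availability (HA) configurations. For details, see: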

https://www.ibm.com/developerworks/cn/opensource/os-cn-hadoop-name-node/index.html

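Configuration

Because the configuration is identical on every machine, the usual approach is to configure one server and then copy the configuration to the others.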
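The copy step can be sketched as a small shell loop. This is only an illustration under assumptions: the worker host names (vcentos2, vcentos3), the install path, the use of rsync over passwordless SSH, and the `sync_conf` helper itself are hypothetical choices rather than part of the original setup. By default the function only prints the commands it would run.

```shell
# Hypothetical values: adjust them to your own cluster.
HADOOP_HOME="${HADOOP_HOME:-/usr/local/hadoop-2.6.5}"
WORKERS="${WORKERS:-vcentos2 vcentos3}"
DRY_RUN="${DRY_RUN:-1}"   # 1 = print the commands only, 0 = really copy

# Copy the local etc/hadoop directory to the same path on every worker.
sync_conf() {
  local host src="$HADOOP_HOME/etc/hadoop/"
  for host in $WORKERS; do
    if [ "$DRY_RUN" = "1" ]; then
      echo "rsync -av $src $host:$src"
    else
      rsync -av "$src" "$host:$src"
    fi
  done
}

sync_conf
```

Running it with `DRY_RUN=0` performs the copy; keeping the dry run as the default makes it harder to overwrite a worker's configuration by accident.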

JAVA_HOME

Set JAVA_HOME in the hadoop-env.sh file.
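One way to make this change repeatable is a small helper that rewrites the `export JAVA_HOME=` line in hadoop-env.sh, or appends one if it is missing. The `set_java_home` function and the JDK path in the usage comment are assumptions for illustration only.

```shell
# Set JAVA_HOME in the given hadoop-env.sh file.
# Replaces an existing "export JAVA_HOME=..." line, or appends one.
set_java_home() {
  local env_file="$1" java_home="$2"
  if grep -q '^export JAVA_HOME=' "$env_file"; then
    # -i.bak keeps a backup copy and works with both GNU and BSD sed
    sed -i.bak "s|^export JAVA_HOME=.*|export JAVA_HOME=$java_home|" "$env_file"
  else
    echo "export JAVA_HOME=$java_home" >> "$env_file"
  fi
}

# Usage (hypothetical JDK path):
#   set_java_home "$HADOOP_HOME/etc/hadoop/hadoop-env.sh" /usr/java/default
```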

core-site.xml

Configure the HDFS file system: fs.defaultFS points to the NameNode of the HDFS cluster.

    <property>
      <name>fs.defaultFS</name>
      <value>hdfs://{hdfs-name-node-server-host}:9000</value>
    </property>

hadoop.tmp.dir sets the directory where Hadoop stores the files it generates at runtime:

    <property>
      <name>hadoop.tmp.dir</name>
      <value>/usr/local/hadoop-data/tmp</value>
    </property>

hdfs-site.xml

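Configure the number of file replicas and the Secondary NameNode. Note that dfs.secondary.http.address is the older name of this property; in Hadoop 2.x it is deprecated in favor of dfs.namenode.secondary.http-address, although the old name is still accepted: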

    <property>
      <name>dfs.replication</name>
      <value>1</value>
    </property>
    <property>
      <name>dfs.secondary.http.address</name>
      <value>{second-namenode-host}:50090</value>
    </property>
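To confirm that a node actually picks up these values, the effective configuration can be queried with `hdfs getconf -confKey`, an existing HDFS subcommand. The sketch below wraps it in a hypothetical `print_conf` helper and assumes the Hadoop bin directory is already on the PATH.

```shell
# Print the effective value of the HDFS-related keys configured above.
print_conf() {
  if ! command -v hdfs >/dev/null 2>&1; then
    echo "hdfs not found on PATH" >&2
    return 1
  fi
  local key
  for key in fs.defaultFS hadoop.tmp.dir dfs.replication; do
    echo "$key=$(hdfs getconf -confKey "$key")"
  done
}

# Usage, on any configured node:
#   print_conf
```

Running it on several servers is a quick way to spot a machine whose configuration was not copied over.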

yarn-site.xml

Configure the YARN ResourceManager:

    <property>
      <name>yarn.resourcemanager.hostname</name>
      <value>{resource-manager-host}</value>
    </property>

And the way reducers fetch their data (the shuffle auxiliary service):

    <property>
      <name>yarn.nodemanager.aux-services</name>
      <value>mapreduce_shuffle</value>
    </property>

Finally, remember to add Hadoop's bin and sbin directories to the PATH environment variable:

    export HADOOP_HOME=/usr/local/hadoop-2.6.5
    export PATH=$PATH:$JAVA_HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin
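After reloading the shell profile, it is worth checking that both directories really ended up on the PATH. The following is a minimal sketch; `path_contains` is a hypothetical helper, and the default install path is only a fallback for the example.

```shell
# Return success if the given directory is an entry of $PATH.
path_contains() {
  case ":$PATH:" in
    *":$1:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Fallback install path used only when HADOOP_HOME is not set.
HADOOP_HOME="${HADOOP_HOME:-/usr/local/hadoop-2.6.5}"
for dir in "$HADOOP_HOME/bin" "$HADOOP_HOME/sbin"; do
  if path_contains "$dir"; then
    echo "on PATH: $dir"
  else
    echo "missing from PATH: $dir"
  fi
done
```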

Format the NameNode

    hdfs namenode -format

The older form, hadoop namenode -format, still works in 2.x but is deprecated.
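Formatting initializes the NameNode's metadata directory, so re-running it on a cluster that already holds data is destructive. A guard like the one below can help; it assumes the default metadata location, `${hadoop.tmp.dir}/dfs/name`, which applies when dfs.namenode.name.dir is not set explicitly. The `format_once` name is hypothetical.

```shell
# Format the NameNode only if its metadata directory has not been initialized.
# Default path assumes hadoop.tmp.dir=/usr/local/hadoop-data/tmp and no
# explicit dfs.namenode.name.dir setting.
format_once() {
  local name_dir="${1:-/usr/local/hadoop-data/tmp/dfs/name}"
  if [ -f "$name_dir/current/VERSION" ]; then
    echo "NameNode already formatted at $name_dir, skipping"
    return 0
  fi
  hdfs namenode -format
}

# Usage, on the NameNode host:
#   format_once
```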

Start Hadoop

First start the HDFS NameNode:

    hadoop-daemon.sh start namenode

Then, on each DataNode machine in the cluster, start the DataNode:

    hadoop-daemon.sh start datanode

Check the startup result

    [root@server1 ~]# jps
    2111 Jps
    2077 NameNode
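The same jps check can be scripted. This sketch parses jps output, which lists one `<pid> <name>` pair per line; the `daemon_running` and `report_daemons` helpers are hypothetical names introduced for illustration.

```shell
# Succeed if a JVM with the given main-class name appears in the jps output.
daemon_running() {
  jps | awk '{print $2}' | grep -qx "$1"
}

# Print a one-line status for each daemon name passed in.
report_daemons() {
  local name
  for name in "$@"; do
    if daemon_running "$name"; then
      echo "$name: running"
    else
      echo "$name: NOT running"
    fi
  done
}

# Only run the report when jps (shipped with the JDK) is available.
if command -v jps >/dev/null 2>&1; then
  report_daemons NameNode DataNode
fi
```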

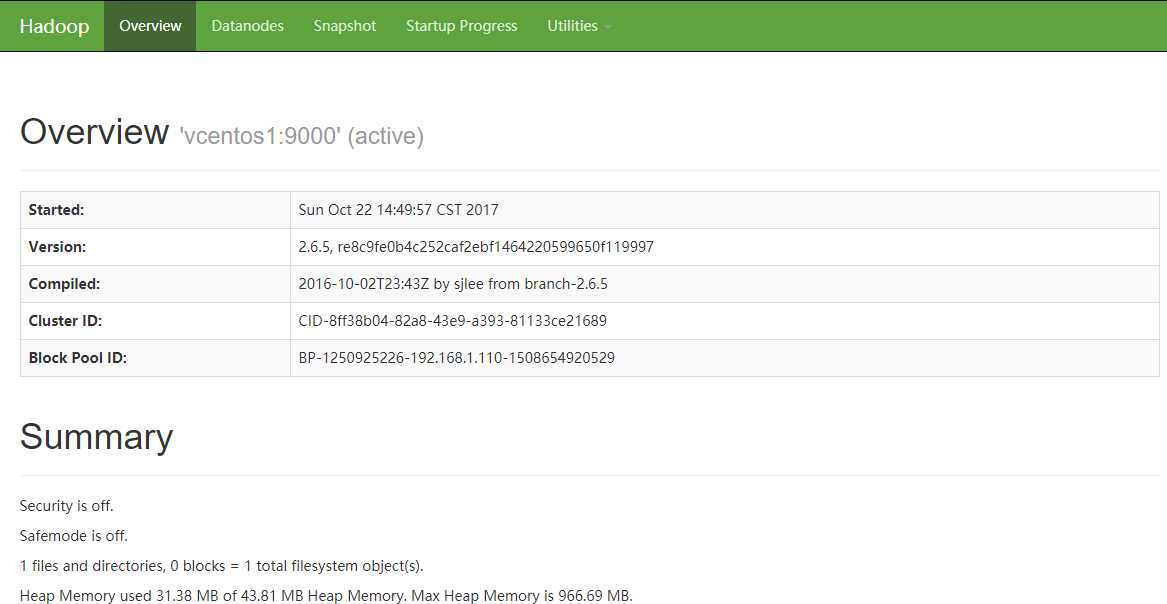

如果启动成功,通过http://server1:50070,可以看到类似下面的页面:

Then start YARN

    [root@vcentos1 sbin]# start-yarn.sh
    starting yarn daemons
    starting resourcemanager, logging to /usr/local/hadoop-2.6.5/logs/yarn-root-resourcemanager-vcentos1.out
    vcentos3: starting nodemanager, logging to /usr/local/hadoop-2.6.5/logs/yarn-root-nodemanager-vcentos3.out
    vcentos2: starting nodemanager, logging to /usr/local/hadoop-2.6.5/logs/yarn-root-nodemanager-vcentos2.out
    [root@server1 sbin]# jps
    2450 ResourceManager
    2516 Jps
    2077 NameNode

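The scripts in Hadoop's sbin directory are used to manage the Hadoop services:

hadoop-daemon.sh: starts or stops a single daemon, such as the NameNode or a DataNode, on the local machine;

start-dfs.sh / stop-dfs.sh: together with etc/hadoop/slaves (under the Hadoop installation directory), start or stop the NameNode and all DataNodes in the cluster in one step;

start-yarn.sh / stop-yarn.sh: together with etc/hadoop/slaves, start or stop the ResourceManager and all NodeManagers in the cluster in one step;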
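The slaves file that these batch scripts read is a plain list of worker host names, one per line. As a sketch, it could be generated as follows; the host names vcentos2 and vcentos3 are taken from the start-yarn.sh log output in this post, and `write_slaves` is a hypothetical helper.

```shell
# Write one worker host name per line into <conf_dir>/slaves.
write_slaves() {
  local conf_dir="$1"
  shift
  printf '%s\n' "$@" > "$conf_dir/slaves"
}

# Usage, on the node where start-dfs.sh / start-yarn.sh will be run:
#   write_slaves "$HADOOP_HOME/etc/hadoop" vcentos2 vcentos3
#   start-dfs.sh
#   start-yarn.sh
```

The batch scripts log in to each listed host over SSH, so passwordless SSH from the master to the workers is assumed here.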

The commands in the bin directory provide access to the HDFS, YARN, and MapReduce services:

    [root@server1 bin]# hadoop fs
    Usage: hadoop fs [generic options]
        [-appendToFile <localsrc> ... <dst>]
        [-cat [-ignoreCrc] <src> ...]
        [-checksum <src> ...]
        [-chgrp [-R] GROUP PATH...]
        [-chmod [-R] <MODE[,MODE]... | OCTALMODE> PATH...]
        [-chown [-R] [OWNER][:[GROUP]] PATH...]
        [-copyFromLocal [-f] [-p] [-l] <localsrc> ... <dst>]
        [-copyToLocal [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]
        [-count [-q] [-h] <path> ...]
        [-cp [-f] [-p | -p[topax]] <src> ... <dst>]
        [-createSnapshot <snapshotDir> [<snapshotName>]]
        [-deleteSnapshot <snapshotDir> <snapshotName>]
        [-df [-h] [<path> ...]]
        [-du [-s] [-h] <path> ...]
        [-expunge]
        [-get [-p] [-ignoreCrc] [-crc] <src> ... <localdst>]
        [-getfacl [-R] <path>]
        [-getfattr [-R] {-n name | -d} [-e en] <path>]
        [-getmerge [-nl] <src> <localdst>]
        [-help [cmd ...]]
        [-ls [-d] [-h] [-R] [<path> ...]]
        [-mkdir [-p] <path> ...]
        [-moveFromLocal <localsrc> ... <dst>]
        [-moveToLocal <src> <localdst>]
        [-mv <src> ... <dst>]
        [-put [-f] [-p] [-l] <localsrc> ... <dst>]
        [-renameSnapshot <snapshotDir> <oldName> <newName>]
        [-rm [-f] [-r|-R] [-skipTrash] <src> ...]
        [-rmdir [--ignore-fail-on-non-empty] <dir> ...]
        [-setfacl [-R] [{-b|-k} {-m|-x <acl_spec>} <path>]|[--set <acl_spec> <path>]]
        [-setfattr {-n name [-v value] | -x name} <path>]
        [-setrep [-R] [-w] <rep> <path> ...]
        [-stat [format] <path> ...]
        [-tail [-f] <file>]
        [-test -[defsz] <path>]
        [-text [-ignoreCrc] <src> ...]
        [-touchz <path> ...]
        [-usage [cmd ...]]
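A few of the subcommands from the usage listing are enough for a quick end-to-end check of a running cluster. The sketch below only uses options shown in that listing (-mkdir -p, -put -f, -ls, -cat); the `hdfs_smoke_test` function and the HDFS path /tmp/smoke-test are hypothetical.

```shell
# Write a small file into HDFS, list the directory, and read the file back.
hdfs_smoke_test() {
  local dir="${1:-/tmp/smoke-test}"   # hypothetical HDFS directory
  local tmp status
  tmp="$(mktemp)"
  echo "hello hdfs" > "$tmp"
  hadoop fs -mkdir -p "$dir" &&
    hadoop fs -put -f "$tmp" "$dir/hello.txt" &&
    hadoop fs -ls "$dir" &&
    hadoop fs -cat "$dir/hello.txt"
  status=$?
  rm -f "$tmp"          # clean up the local temporary file
  return "$status"
}

# Usage, once HDFS is up:
#   hdfs_smoke_test
```

If the final command prints the file content, the NameNode and at least one DataNode are accepting reads and writes.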

References:

Latest installation guide: http://hadoop.apache.org/docs/current/hadoop-project-dist/hadoop-common/ClusterSetup.html

2.6.5 installation guide: http://hadoop.apache.org/docs/r2.6.5/hadoop-project-dist/hadoop-common/SingleCluster.html

Secondary NameNode: http://blog.madhukaraphatak.com/secondary-namenode---what-it-really-do/