
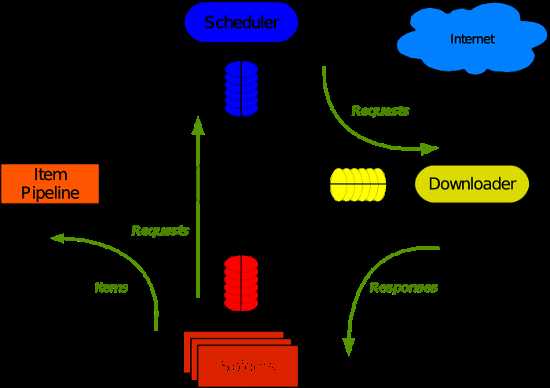

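Scrapy uses the Twisted asynchronous networking library to handle network communication. The overall architecture is roughly as follows:

Scrapy mainly consists of the following components:

Scrapy's execution flow is roughly as follows: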

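The code below uses three Twisted objects:

getPage: wraps a socket internally, downloads the HTML page at a URL, and fires its callbacks automatically once the download completes.

Deferred: a bare defer.Deferred() is not tied to any socket or network operation, so it never fires by itself; it has to be fired manually by calling its callback() method. Here it is used to mark the end of the whole crawl.

reactor: wraps I/O multiplexing (select, epoll and the like) and runs the event loop.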
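To make "wraps I/O multiplexing and runs the event loop" concrete, here is a minimal sketch of the idea using Python's standard `selectors` module rather than Twisted: one loop waits on many sockets at once and calls the callback registered for whichever socket is ready. The names `on_readable` and `run_once` are hypothetical, and a local socket pair stands in for a real HTTP connection; Twisted's actual reactor is far more complete than this.

```python
import selectors
import socket

sel = selectors.DefaultSelector()
received = []


def on_readable(sock):
    # Callback the loop invokes when this socket has data to read
    received.append(sock.recv(1024))


def run_once(timeout=1.0):
    # One iteration of the event loop: block until a registered socket is
    # ready, then dispatch to the callback stored in its `data` field
    for key, _events in sel.select(timeout):
        callback = key.data
        callback(key.fileobj)


# A connected socket pair stands in for a network connection
reader, writer = socket.socketpair()
sel.register(reader, selectors.EVENT_READ, data=on_readable)

writer.sendall(b"<html>hello</html>")
run_once()
print(received)

sel.unregister(reader)
reader.close()
writer.close()
```

Twisted's reactor follows the same pattern of registering interest, waiting, then dispatching, but it hides the selector behind Deferreds, so user code only sees callbacks firing.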

A DeferredList tracks all crawl tasks; once every one of them has fired, its callback calls reactor.stop() to end the reactor's event loop.

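The execution flow is roughly:

1. crawl turns the generator of start URLs returned by the spider's start_requests into an iterator and adds each request to the scheduler.

2. The reactor calls _next_request, which takes Request objects from the scheduler and sends the requests.

3. When a page finishes downloading, get_response_callback is called. It calls the parse method defined in the user's spider, and if parse yields Request objects, it adds those new requests to the scheduler. As long as the maximum concurrency has not been reached, more Requests can then be taken from the scheduler.

4. When the scheduler holds no more Request objects and no download is still in flight, the crawl is finished. At that point the close Deferred is fired and the reactor is stopped.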
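The four steps above can be simulated without Twisted or a network. The sketch below is a hypothetical, synchronous stand-in. `PAGES`, `fake_download` and the `parse(body, request)` signature are invented for illustration, and in-flight requests "complete" one at a time instead of concurrently. The scheduler queue, the concurrency cap, the expansion of generators returned by parse, and the stop condition (empty scheduler and nothing in flight) mirror the engine's logic.

```python
import queue
import types

# Hypothetical site: URL -> (page body, links found on the page)
PAGES = {
    "/": ("home", ["/a", "/b"]),
    "/a": ("page a", []),
    "/b": ("page b", ["/c"]),
    "/c": ("page c", []),
}


class Request:
    def __init__(self, url, callback):
        self.url = url
        self.callback = callback


def fake_download(url):
    # Stand-in for getPage: returns the page body immediately
    return PAGES[url][0]


visited = []


def parse(body, request):
    visited.append(request.url)
    # Like a spider's parse: yield a follow-up request for each link
    for link in PAGES[request.url][1]:
        yield Request(link, parse)


def crawl(start_url, max_concurrency=2):
    scheduler = queue.Queue()
    scheduler.put(Request(start_url, parse))  # step 1: seed the scheduler
    crawling = []
    while True:
        # step 2: fill free download slots from the scheduler
        while len(crawling) < max_concurrency and not scheduler.empty():
            crawling.append(scheduler.get_nowait())
        # step 4: nothing scheduled and nothing in flight -> finished
        if not crawling:
            return visited
        # step 3: one in-flight request completes; queue what parse yields
        req = crawling.pop(0)
        result = req.callback(fake_download(req.url), req)
        if isinstance(result, types.GeneratorType):
            for new_req in result:
                scheduler.put(new_req)


print(crawl("/"))  # ['/', '/a', '/b', '/c']
```

Because parse is a generator function, its body (including `visited.append`) only runs when the engine iterates over the result, which is why the `types.GeneratorType` check matters.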

The code is as follows:

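```python
import queue
import types

from twisted.internet import defer
from twisted.internet import reactor
# getPage is deprecated and has been removed from newer Twisted releases;
# there, twisted.web.client.Agent together with readBody replaces it.
from twisted.web.client import getPage


class Request(object):
    def __init__(self, url, callback):
        self.url = url
        self.callback = callback


class HttpResponse(object):
    def __init__(self, content, request):
        self.content = content
        self.request = request
        self.url = request.url
        self.text = str(content, encoding='utf-8')


class ChoutiSpider(object):
    name = "chouti"

    def start_requests(self):
        start_urls = ['http://dig.chouti.com']
        for url in start_urls:
            yield Request(url, callback=self.parse)

    def parse(self, response):
        # yield Request("http://cn.bing.com", callback=self.parse)
        print(response)


# The scheduler: a FIFO queue of pending Request objects
Q = queue.Queue()


class Engine(object):
    def __init__(self):
        self._close = None   # Deferred fired when the whole crawl is finished
        self.max = 5         # maximum number of concurrent downloads
        self.crawling = []   # requests currently being downloaded

    def get_response_callback(self, content, request):
        self.crawling.remove(request)
        rep = HttpResponse(content, request)
        # Call the spider's parse method; if parse contains yield, result is a generator
        result = request.callback(rep)
        if isinstance(result, types.GeneratorType):
            for req in result:
                Q.put(req)

    def get_response_errback(self, failure, request):
        # A failed download must also leave self.crawling; otherwise the
        # "queue empty and nothing in flight" check never passes and the crawl hangs
        self.crawling.remove(request)
        print("download failed:", request.url, failure.getErrorMessage())

    def _next_request(self):
        # Take Request objects from the scheduler and send the requests
        if Q.qsize() == 0 and len(self.crawling) == 0:
            # Several finished downloads can schedule _next_request in the same
            # reactor iteration, so fire the close Deferred only once
            if not self._close.called:
                self._close.callback(None)
            return

        if len(self.crawling) >= self.max:
            return

        while len(self.crawling) < self.max:
            try:
                req = Q.get(block=False)
            except queue.Empty:
                return
            self.crawling.append(req)
            d = getPage(req.url.encode("utf-8"))
            # Page downloaded: get_response_callback calls the spider's parse method
            # and adds new requests to the scheduler; a failure goes to the errback
            d.addCallbacks(self.get_response_callback, self.get_response_errback,
                           callbackArgs=(req,), errbackArgs=(req,))
            # Below the concurrency limit again: take more Requests from the scheduler
            d.addCallback(lambda _: reactor.callLater(0, self._next_request))

    @defer.inlineCallbacks
    def crawl(self, spider):
        start_requests = iter(spider.start_requests())
        # Add the start requests to the scheduler
        while True:
            try:
                request = next(start_requests)
                Q.put(request)
            except StopIteration:
                break
        # Once the reactor is running, take requests from the scheduler and send them
        reactor.callLater(0, self._next_request)
        self._close = defer.Deferred()
        # crawl's own Deferred fires only after _close has been fired
        yield self._close


spider = ChoutiSpider()
_active = set()
engine = Engine()
d = engine.crawl(spider)
_active.add(d)
# The DeferredList fires once every crawl has finished; then stop the reactor
dd = defer.DeferredList(_active)
dd.addBoth(lambda _: reactor.stop())
reactor.run()
```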


Original article: https://www.cnblogs.com/dominik/p/10011156.html