
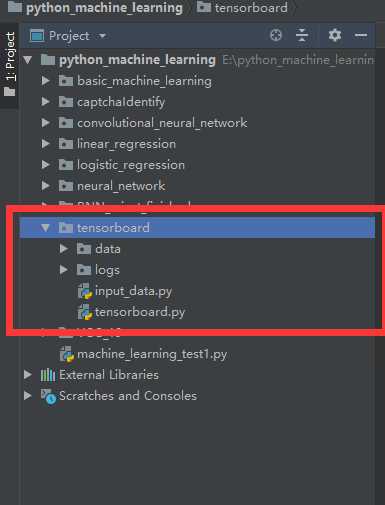

1. Project directory

2. Importing the data folder and input_data.py

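In TensorFlow versions that still ship the tutorials module, input_data can also be imported directly from the package (this module is not included in TensorFlow 2.x):

```python
# from tensorflow.examples.tutorials.mnist import input_data
```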

Download link for the data folder and input_data.py (Baidu Netdisk): https://pan.baidu.com/s/1EBNyNurBXWeJVyhNeVnmnA

Extraction code: 4nnl
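For reference, here is a minimal sketch of roughly what `input_data.read_data_sets('data', one_hot=True)` does with each file. It assumes the data folder holds the standard gzipped MNIST IDX files (for example `train-images-idx3-ubyte.gz` and `train-labels-idx1-ubyte.gz`; these names are an assumption about the download). The helpers `read_idx_images`, `read_idx_labels` and `load_gz` are hypothetical and not part of input_data.py. An IDX file starts with a big-endian header (magic number 2051 for images, 2049 for labels, then the counts), followed by raw unsigned bytes.

```python
import gzip
import struct

import numpy as np


def load_gz(path):
    """Read a gzipped file fully into memory (hypothetical helper)."""
    with gzip.open(path, "rb") as f:
        return f.read()


def read_idx_images(raw):
    """Parse an uncompressed IDX3 image file into an (N, rows*cols) float32 array in [0, 1]."""
    magic, count, rows, cols = struct.unpack(">IIII", raw[:16])
    if magic != 2051:
        raise ValueError(f"unexpected magic number {magic} for an image file")
    pixels = np.frombuffer(raw, dtype=np.uint8, offset=16)
    # Flatten each 28x28 image to a 784-vector, matching the x placeholder's shape.
    return pixels.reshape(count, rows * cols).astype(np.float32) / np.float32(255.0)


def read_idx_labels(raw, num_classes=10):
    """Parse an uncompressed IDX1 label file into one-hot rows, as one_hot=True does."""
    magic, count = struct.unpack(">II", raw[:8])
    if magic != 2049:
        raise ValueError(f"unexpected magic number {magic} for a label file")
    labels = np.frombuffer(raw, dtype=np.uint8, offset=8)
    one_hot = np.zeros((count, num_classes), dtype=np.float32)
    one_hot[np.arange(count), labels] = 1.0  # one 1.0 per row, at the digit's index
    return one_hot
```

Usage, under the file-name assumption above: `images = read_idx_images(load_gz("data/train-images-idx3-ubyte.gz"))` and `labels = read_idx_labels(load_gz("data/train-labels-idx1-ubyte.gz"))`.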

3. Neural network training script: tensorboard.py

```python
# TensorFlow 1.x API (tf.placeholder, tf.InteractiveSession, tf.summary.FileWriter).
# Under TensorFlow 2.x the usual shim is `import tensorflow.compat.v1 as tf`
# followed by `tf.disable_v2_behavior()`.
import tensorflow as tf

import input_data  # the input_data.py downloaded above, placed next to this script

max_steps = 1000        # number of training iterations
learning_rate = 0.001   # Adam learning rate
dropout = 0.9           # keep probability used during training
log_dir = 'logs/'       # event files and checkpoints are written here

# Read MNIST from the local 'data' folder; labels become one-hot vectors of length 10.
mnist = input_data.read_data_sets('data', one_hot=True)

sess = tf.InteractiveSession()

with tf.name_scope('input'):
    x = tf.placeholder(tf.float32, [None, 784], name='x-input')
    y_ = tf.placeholder(tf.float32, [None, 10], name='y-input')

with tf.name_scope('input_reshape'):
    # Turn the flat 784-pixel vectors back into 28x28 grayscale images for the IMAGES tab.
    image_shaped_input = tf.reshape(x, [-1, 28, 28, 1])
    tf.summary.image('input', image_shaped_input, 10)


def weight_variable(shape):
    # Small random weights drawn from a truncated normal distribution.
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)


def bias_variable(shape):
    # Slightly positive biases so the ReLU units start out active.
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)


def variable_summaries(var):
    """Attach mean/stddev/max/min scalars and a histogram of var for TensorBoard."""
    with tf.name_scope('summaries'):
        mean = tf.reduce_mean(var)
        tf.summary.scalar('mean', mean)
        with tf.name_scope('stddev'):
            # Standard deviation: sqrt(mean((var - mean)^2)).
            stddev = tf.sqrt(tf.reduce_mean(tf.square(var - mean)))
        tf.summary.scalar('stddev', stddev)
        tf.summary.scalar('max', tf.reduce_max(var))
        tf.summary.scalar('min', tf.reduce_min(var))
        tf.summary.histogram('histogram', var)


def nn_layer(input_tensor, input_dim, output_dim, layer_name, act=tf.nn.relu):
    """Fully connected layer act(input_tensor * W + b), with summaries for W, b and outputs."""
    with tf.name_scope(layer_name):
        with tf.name_scope('weights'):
            weights = weight_variable([input_dim, output_dim])
            variable_summaries(weights)
        with tf.name_scope('biases'):
            biases = bias_variable([output_dim])
            variable_summaries(biases)
        with tf.name_scope('Wx_plus_b'):
            preactivate = tf.matmul(input_tensor, weights) + biases
            tf.summary.histogram('pre_activations', preactivate)
        activations = act(preactivate, name='activation')
        tf.summary.histogram('activations', activations)
        return activations


hidden1 = nn_layer(x, 784, 500, 'layer1')

with tf.name_scope('dropout'):
    keep_prob = tf.placeholder(tf.float32)
    tf.summary.scalar('dropout_keep_probability', keep_prob)
    dropped = tf.nn.dropout(hidden1, keep_prob)

# Output layer returns raw logits (identity activation); softmax is applied inside the loss.
y = nn_layer(dropped, 500, 10, 'layer2', act=tf.identity)

with tf.name_scope('cross_entropy'):
    diff = tf.nn.softmax_cross_entropy_with_logits(logits=y, labels=y_)
    with tf.name_scope('total'):
        cross_entropy = tf.reduce_mean(diff)
tf.summary.scalar('cross_entropy', cross_entropy)

with tf.name_scope('train'):
    train_step = tf.train.AdamOptimizer(learning_rate).minimize(cross_entropy)

with tf.name_scope('accuracy'):
    with tf.name_scope('correct_prediction'):
        correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    with tf.name_scope('accuracy'):
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
tf.summary.scalar('accuracy', accuracy)

# Merge all summary ops; train and test summaries go to separate subdirectories.
merged = tf.summary.merge_all()
train_writer = tf.summary.FileWriter(log_dir + 'train', sess.graph)
test_writer = tf.summary.FileWriter(log_dir + 'test')
tf.global_variables_initializer().run()


def feed_dict(train):
    """Training: a batch of 100 with dropout. Testing: the full test set with keep_prob=1."""
    if train:
        xs, ys = mnist.train.next_batch(100)
        k = dropout
    else:
        xs, ys = mnist.test.images, mnist.test.labels
        k = 1.0
    return {x: xs, y_: ys, keep_prob: k}


saver = tf.train.Saver()

for i in range(max_steps):
    if i % 10 == 0:
        # Every 10 steps: record summaries and test-set accuracy (no training step).
        summary, acc = sess.run([merged, accuracy], feed_dict=feed_dict(False))
        test_writer.add_summary(summary, i)
        print('Accuracy at step %s: %s' % (i, acc))
    else:
        if i % 100 == 99:
            # Every 100 steps: also record run-time statistics and save a checkpoint.
            run_options = tf.RunOptions(trace_level=tf.RunOptions.FULL_TRACE)
            run_metadata = tf.RunMetadata()
            summary, _ = sess.run([merged, train_step],
                                  feed_dict=feed_dict(True),
                                  options=run_options,
                                  run_metadata=run_metadata)
            train_writer.add_run_metadata(run_metadata, 'step%03d' % i)
            train_writer.add_summary(summary, i)
            saver.save(sess, log_dir + 'model.ckpt', i)
            print('Adding run metadata for', i)
        else:
            # Otherwise: one training step, recording its summaries.
            summary, _ = sess.run([merged, train_step], feed_dict=feed_dict(True))
            train_writer.add_summary(summary, i)

train_writer.close()
test_writer.close()
```
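`variable_summaries` logs four scalars for every weight and bias tensor. A NumPy sketch of the same quantities (the function name `summary_stats` is hypothetical) makes the standard-deviation formula concrete: stddev = sqrt(mean((var - mean)^2)). Taking a square root of `var - mean` directly instead would give NaN for every entry below the mean, so the logged stddev curve would be empty.

```python
import numpy as np


def summary_stats(var):
    """NumPy version of the four scalars variable_summaries records for a tensor."""
    var = np.asarray(var, dtype=np.float64)
    mean = var.mean()
    # Population standard deviation: square the deviations, average them, take the root.
    stddev = np.sqrt(np.mean(np.square(var - mean)))
    return {
        "mean": float(mean),
        "stddev": float(stddev),
        "max": float(var.max()),
        "min": float(var.min()),
    }


weights = np.array([[1.0, 2.0], [3.0, 4.0]])
print(summary_stats(weights))
```

This matches `np.std` (population standard deviation), which is also what the TensorFlow expression computes.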

4. Visualizing with TensorBoard after training locally

In a terminal, run:

```shell
tensorboard --logdir "tensorboard/logs" --port=8082
```
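If the page loads but shows no data, it is worth checking that `--logdir` points at the folder the script actually wrote to. The script writes to `logs/train` and `logs/test` relative to the directory it was run from, and TensorBoard looks for files named `events.out.tfevents.*` in the log directory and its subdirectories. A small standard-library sketch that lists them (the helper `find_event_files` is hypothetical):

```python
from pathlib import Path


def find_event_files(logdir):
    """Return event files under logdir (searched recursively) as sorted POSIX-style relative paths."""
    root = Path(logdir)
    return sorted(
        p.relative_to(root).as_posix()
        for p in root.rglob("events.out.tfevents.*")
        if p.is_file()
    )


if __name__ == "__main__":
    # Same directory as the --logdir argument above, relative to the current directory.
    for name in find_event_files("tensorboard/logs"):
        print(name)
```

An empty result means TensorBoard has nothing to display for that `--logdir`.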

Once the TensorBoard server is running, open:

http://localhost:8082
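If the browser cannot connect at all (as opposed to showing a blank page), TensorBoard may not be running, or may be on a different port. A standard-library sketch that checks whether anything accepts TCP connections on that port (the helper name `is_listening` is hypothetical):

```python
import socket


def is_listening(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port can be opened within timeout seconds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # connection refused, timeout, unresolvable host, ...
        return False


if __name__ == "__main__":
    # 8082 matches the --port passed to tensorboard above.
    print("TensorBoard reachable:", is_listening("localhost", 8082))
```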

PS: problems you may run into:

1. The browser shows a blank page when visiting localhost:8082

Cause: the installed TensorFlow version is too old.

Fix: upgrade TensorFlow:

```shell
pip3 install --upgrade tensorflow
```
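To see which version is installed before and after upgrading, here is a sketch using the standard library's `importlib.metadata` (Python 3.8+). The helper names are hypothetical. If the GPU build was installed, the distribution name may be `tensorflow-gpu` rather than `tensorflow`. Note that an unpinned upgrade installs the newest release, which is 2.x and does not run the 1.x-style training script above as-is.

```python
import re
from importlib import metadata


def installed_version(dist_name):
    """Version string of an installed distribution, or None if it is not installed."""
    try:
        return metadata.version(dist_name)
    except metadata.PackageNotFoundError:
        return None


def version_tuple(version):
    """Leading numeric part of a version as a tuple, e.g. '2.0.0rc1' -> (2, 0, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    return tuple(int(part) for part in match.group(0).split(".")) if match else ()


if __name__ == "__main__":
    v = installed_version("tensorflow")
    print("tensorflow:", v if v else "not installed")
    if v:
        print("major.minor:", version_tuple(v)[:2])
```

Tuples compare element by element, so `version_tuple("1.12.0") < version_tuple("1.13.1")` is a quick "is this older?" check.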

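Possible problem during the upgrade: writing to the drive where Python is installed requires administrator permission.

Fix: open This PC --> right-click the drive whose permissions need to change --> Properties --> Security --> Edit (for Users) --> tick Modify, then click Apply.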
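An alternative to changing drive permissions is pip's `--user` option, which installs into a per-user directory that normally needs no administrator rights (`pip3 install --user --upgrade tensorflow`). To check beforehand whether the current account can write to the interpreter's site-packages directory, a sketch that simply tries to create a temporary file there (helper names are hypothetical):

```python
import sysconfig
import tempfile


def can_write_to(directory):
    """Return True if a temporary file can be created (and auto-removed) in directory."""
    try:
        with tempfile.TemporaryFile(dir=directory):
            return True
    except OSError:  # PermissionError, missing directory, ...
        return False


def site_packages_dir():
    """The interpreter's pure-Python site-packages directory (typically where pip installs)."""
    return sysconfig.get_paths()["purelib"]


if __name__ == "__main__":
    target = site_packages_dir()
    status = "writable" if can_write_to(target) else "not writable (admin rights or pip --user needed)"
    print(target, status)
```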


Original post: https://www.cnblogs.com/CK85/p/10278259.html